Getting Started with TensorFlow for Machine Learning

These days, a lot of people are pretty excited about Machine Learning (ML). This is interesting, because the field has been around for a really long time. The term ‘Machine Learning’ itself was coined at IBM in 1959, and the field evolved as a subset of Artificial Intelligence.

Machine Learning was defined at the time as a way to give "computers the ability to learn without being explicitly programmed." More accurately, and more recently, the "learning" aspect of ML can be described thus: "A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P if its performance at tasks in T, as measured by P, improves with experience E." (Tom Mitchell, 1997). In a nutshell, by watching the performance of a computer program as it iterates, one can quantify its improvement and thus state that it is learning (or not). This is very interesting to programmers who want to create applications that can respond to human users with ever-more-useful reactions.
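To make Mitchell's definition concrete, here is a minimal sketch rather than anything taken from TensorFlow. It assumes a toy task T (predicting the logical OR of two inputs), a performance measure P (accuracy on four examples), and experience E (passes over those examples). The learner uses the classic perceptron learning rule, chosen only for illustration. All names in the code are hypothetical.

```python
# Task T: predict the logical OR of two binary inputs.
examples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]


def predict(weights, bias, inputs):
    """Output 1 when the weighted sum of the inputs is positive, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def accuracy(weights, bias):
    """Performance measure P: fraction of examples predicted correctly."""
    correct = sum(predict(weights, bias, x) == y for x, y in examples)
    return correct / len(examples)


weights, bias = [0, 0], 0
history = [accuracy(weights, bias)]  # P before any experience

# Experience E: each pass over the examples nudges the weights
# toward the correct answer whenever a prediction is wrong.
for epoch in range(4):
    for inputs, target in examples:
        error = target - predict(weights, bias, inputs)
        weights = [w + error * x for w, x in zip(weights, inputs)]
        bias += error
    history.append(accuracy(weights, bias))

print(history)
```

Watching `history`, the accuracy climbs from 0.25 before training to 1.0 after a few passes, which is exactly the "performance at T, as measured by P, improves with E" that the definition asks for. For simplicity this sketch measures P on the same examples it trains on; a real evaluation would use held-out data.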

It’s only recently, however, with our new powerful processors readily available even to DIY hobbyists, that ML is experiencing a sort of renaissance, or even a ‘golden age’. New tools are being introduced at a rapid rate as the field evolves and more diverse users look to become involved.

Enter TensorFlow, one of Google’s most interesting contributions to the field. In this article, I’ll introduce TensorFlow, outline some paths to getting started using it to train your own ML models, and suggest ways to further your education in this fast-moving field.

What is TensorFlow?

TensorFlow is an open-source software library for machine learning. It is used to build and train systems, in particular neural networks, that learn in ways loosely similar to how humans use reasoning and observation. Google itself uses TensorFlow for some of its best-known software, including Google Translate.

TensorFlow’s predecessor, DistBelief, was introduced in 2011 as a closed-source system built only for internal use. Google Brain, a research team at Google (whose parent company is Alphabet), built TensorFlow as DistBelief's simplified, refactored replacement and released it as open source in November 2015; version 1.0 followed in February 2017. Its use has grown quickly this year, with fresh libraries based on TensorFlow appearing regularly. Most pertinent to the mobile developer is the recent introduction of TensorFlow Mobile, a set of libraries for iOS and Android. Exciting days for a burgeoning field!

TensorFlow is not only a library; it is also a set of APIs. This is convenient, because a data scientist or programmer often doesn’t want to hand-code low-level algorithms each time they are needed in a codebase. Instead, we are now given a choice. With TensorFlow Core, the lowest-level API, the programmer/scientist has full control over the models built. The higher-level APIs, most useful to the web and mobile programmer, are built on top of TensorFlow Core and abstract away some of its complexity.

Example: a line such as the following shows how TensorFlow abstracts away the math inherent in machine learning:

train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

In one line, we tell TensorFlow to minimize cross entropy (a loss that measures how far the model's predictions are from the true labels) by using the gradient descent algorithm with a learning rate of 0.5.

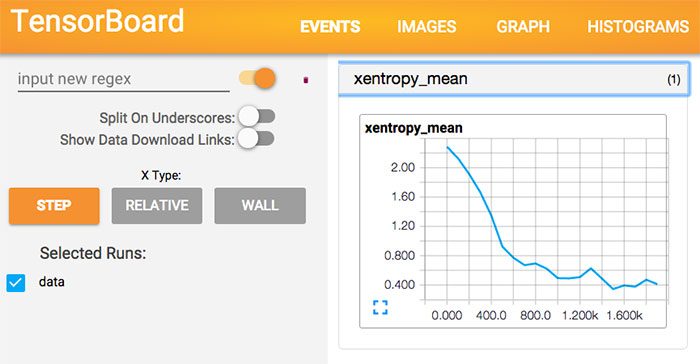
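To get a feel for what that one-line optimizer call hides, here is a rough pure-Python sketch of gradient descent minimizing cross entropy. It is not TensorFlow's implementation. It assumes a tiny two-class problem with one weight and one bias (the MNIST tutorial uses a ten-class softmax instead), and it writes the gradients out by hand, whereas TensorFlow derives them automatically. The data and function names are made up for illustration; only the learning rate of 0.5 comes from the line above.

```python
import math


def sigmoid(z):
    """Squash a real number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def cross_entropy(w, b, xs, ys):
    """Mean binary cross entropy between labels ys and sigmoid(w*x + b)."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(w * x + b)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(xs)


def gradient_descent_step(w, b, xs, ys, learning_rate):
    """Move w and b one step against the hand-derived loss gradient."""
    n = len(xs)
    grad_w = sum((sigmoid(w * x + b) - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(sigmoid(w * x + b) - y for x, y in zip(xs, ys)) / n
    return w - learning_rate * grad_w, b - learning_rate * grad_b


# Hypothetical toy data: negative inputs are class 0, positive are class 1.
xs = [-2.0, -1.0, 1.0, 2.0]
ys = [0, 0, 1, 1]

w, b = 0.0, 0.0
losses = [cross_entropy(w, b, xs, ys)]
for _ in range(20):
    w, b = gradient_descent_step(w, b, xs, ys, learning_rate=0.5)
    losses.append(cross_entropy(w, b, xs, ys))

print("first loss:", losses[0])
print("last loss:", losses[-1])
```

Each pass through the loop plays the role of running `train_step` once: the loss starts at ln 2 (the model is guessing 50/50) and shrinks with every step as the weight moves toward values that separate the two classes.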

In addition, TensorFlow offers a visual learning tool called TensorBoard which provides visualizations in real time of your machine learning work.

What is a Tensor?

TensorFlow is named after the mathematical object called a tensor, which is a geometric object that describes linear relations between geometric vectors, scalars, and other tensors. Given a reference basis of vectors, a tensor can be represented as an organized multidimensional array of numerical values.

Put more simply, "a 'tensor' is like a matrix but with an arbitrary number of dimensions. A 1-dimensional tensor is a vector. A 2-dimensional tensor is a matrix. And then you can have tensors with 3, 4, 5 or more dimensions."

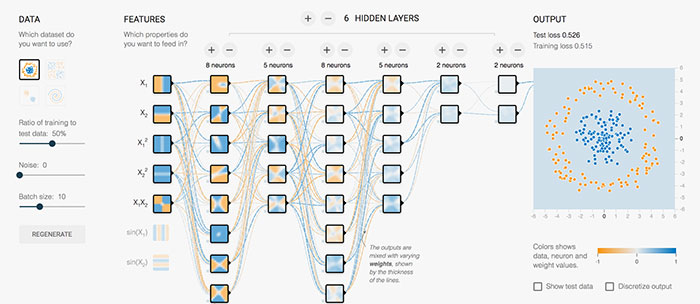
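As a small illustration of the "matrix with an arbitrary number of dimensions" idea, the sketch below represents tensors as nested Python lists and reports their shape and number of dimensions. The helper names `shape` and `rank` are invented for this example and are not TensorFlow functions. The code assumes regular nesting, meaning every sub-list at a given depth has the same length.

```python
def shape(tensor):
    """Return the size of each dimension of a nested-list tensor."""
    dims = []
    while isinstance(tensor, list):
        dims.append(len(tensor))
        if not tensor:  # an empty list has no first element to descend into
            break
        tensor = tensor[0]
    return tuple(dims)


def rank(tensor):
    """Number of dimensions: 0 for a scalar, 1 for a vector, 2 for a matrix."""
    return len(shape(tensor))


scalar = 5.0
vector = [1.0, 2.0, 3.0]
matrix = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
cube = [[[0.0] * 4 for _ in range(3)] for _ in range(2)]

for name, value in [("scalar", scalar), ("vector", vector),
                    ("matrix", matrix), ("cube", cube)]:
    print(name, "rank:", rank(value), "shape:", shape(value))
```

The vector has one dimension, the matrix two, and `cube` three, with shape (2, 3, 4). Adding another level of nesting gives a 4-dimensional tensor, and so on.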

In practical terms, TensorFlow performs operations on multidimensional data arrays that resemble mathematical tensors. If you think of the ways that neural networks can be represented as 3D graphs, you can visualize the way arrays feed this type of network. A fun activity to try is to run a sample training set through the TensorFlow playground to visualize the ebb and flow of data through the network.

Why would I want to learn about it?

TensorFlow is an up-and-coming library that is backed by Google and is quickly spawning very interesting projects. While it’s great to have access to pre-trained models through APIs such as those offered by Clarifai and Google, it’s important, as you progress in machine learning, to be able to dig deeper and train your own models on your own data locally. In addition, it’s important to find a community of programmers, data scientists, and mathematicians who are able to gather together around a project to create a vibrant ecosystem.

A group that is particularly interesting to me is the one creating the Magenta project, powered by TensorFlow. Magenta is a library that uses machine learning to create compelling art and music. You can try drawing with a machine.

Or, listen to music generated by Magenta. If you think decent music can’t be machine generated, think again - the samples on this web site are quite moving - composed by machines and performed by humans.

Tools to use with TensorFlow

One of the main challenges in getting started with TensorFlow is that there is a high barrier to entry - even basic understanding of terminology is hampered if the user begins with no base knowledge of machine learning.

I strongly recommend enrolling in, and completing to the best of your ability, the 11-week classic Coursera course by Andrew Ng, formerly of Baidu and now at Stanford. This course will give you a grasp of the complexities of this field and an idea of the vocabulary that you need to understand to become conversant in machine learning. Enhance your experience of this course by listening to this excellent podcast, which goes over some of the same material at a higher level; it works to reinforce your coursework.

Then, when you are ready to dive into TensorFlow, head over to tensorflow.org and click ‘Get Started’. I found that a great way to get excited about TensorFlow is by doing the codelabs, and a good way to dive in deeper, before really digging into the API, is to go through the MNIST tutorial for beginners. So, a good learning path would be:

- Read this article about the basics of TensorFlow’s mechanics

- Work through the excellent TensorFlow For Poets codelab. This tutorial shows you how to train an image classifier that can recognize various types of flowers. It makes basic use of a classic dataset that you’ll see elsewhere in machine learning tutorials.

- Ensure that Python is installed, and then work through the MNIST tutorial by opening a Python terminal and copying and pasting each line, reading the tutorial as you go. You’ll be exposed to many machine learning basics and see how TensorFlow abstracts them away.

- Walk through these tutorials, especially, to start with, the first one on image recognition.

Special news for the mobile developer! TensorFlow Mobile, with machine learning running on iOS and Android devices via imported models, is already in the process of being built. Take a look at this project and join us on NativeScript Community Slack in the #tensorflow channel if you would like to help us build a plugin to leverage TensorFlow more easily in NativeScript apps!

More Learning Resources

Installing TensorFlow on your local computer can be tedious and confusing. After several tries, I ended up with a functioning installation using pip3 after having previously installed it as a Docker image. A new project called MachineLabs.ai, however, is in beta and looks promising as a way to avoid local installations. It consists of Docker images where you can run your work. If you have problems with your installation, take a look at MachineLabs.

There are many onboarding paths available for TensorFlow, depending on your preferred way of learning.

- Awesome TensorFlow - a library

- Books

- Kaggle.com

  - A community of data scientists with many models free to download and interesting code challenges and contests to deepen your knowledge.

- Deeplearning.ai

  - For those who really want to dive deep, this is a new series of five courses just released by Andrew Ng on Coursera, all about machine learning

- Courses

  - Andrew Ng’s classic Coursera course, the first stop for anyone diving into this field. Complete it and get a badge on LinkedIn!

- Effective TensorFlow

  - A series of articles and tutorials

- Podcasts and videos

- Slacks and Google Groups

  - tensorflow-user-group.slack.com

  - tensorflowtalk.slack.com invite

  - Google Group

- Don’t forget your local user groups and AI meetups

What are you waiting for?

Jumping into machine learning is not a trivial mind shift. One big takeaway from Andrew Ng’s course is that you shouldn’t simply throw a neural network at a problem and walk away. To use the various types of machine learning algorithms properly, you need some understanding of the solutions each can bring to a system. While you are learning, however, leveraging TensorFlow to tackle these tough problems by abstracting some of their complexity away can only help you. Watch this space for more interesting things we're doing with TensorFlow and NativeScript, making our mobile apps a little smarter. Best of luck, and tell me what you are building!

Jen Looper

Jen Looper is a Developer Advocate for Telerik products at Progress. She is a web and mobile developer and founder of Ladeez First Media which is a small indie mobile development studio. In her spare time, she is a dancer, teacher and multiculturalist who is always learning.