Adding Audio to Web Apps

Most web applications are strangely silent. Sure, there was a time when some sites would overwhelm you with audio during their awesome Flash intro - or even worse, play MIDI music - but there are actually useful and valid reasons to add audio to an application.

In fact, desktop applications and mobile phone apps tend to have some degree of audio feedback. Sure, some have too much (Facebook, for instance, beeps and clicks at me far more than is helpful, in my opinion), but, when used properly, audio can be a good way to enhance UI interactions.

In this article, we'll look at some ideas for incorporating simple audio-enhanced UI interactions into an application. We'll examine a few potential use cases involving buttons, sliders and notifications.

Browser Support

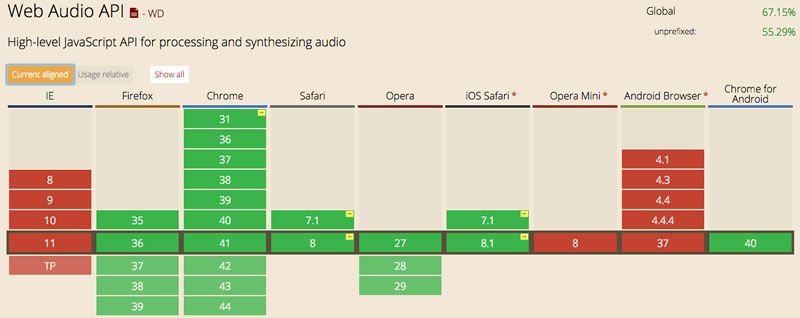

Before we continue, I should note that there are some limitations to support for the Web Audio API. It is supported in most browsers, though notably not in Internet Explorer. In Safari, it works using the webkit prefix. On mobile, it doesn't work in the Android browser (though it does in Chrome for mobile), nor does it work in Opera or IE mobile. Again, it works in Safari on iOS with a prefix. You can get more details on MDN or Can I Use (see the compatibility chart above).
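Because of the prefix in Safari, it can be safer to look up the constructor before creating a context. Here's a minimal sketch of that check. The function names getAudioContextClass and createAudioContext are hypothetical (they aren't part of the Web Audio API or Blip), and the window-like object is passed in as a parameter so the logic can be tried outside a browser.

```javascript
// Return a usable AudioContext constructor from a window-like object,
// or null when the Web Audio API isn't available at all.
function getAudioContextClass(globalObj) {
    return globalObj.AudioContext || globalObj.webkitAudioContext || null;
}

// Create a context only when the API is supported; otherwise return null.
function createAudioContext(globalObj) {
    var Ctor = getAudioContextClass(globalObj);
    return Ctor ? new Ctor() : null;
}

// In a browser you would call:
//   var ctx = createAudioContext(window);
//   if (!ctx) { /* skip the audio feedback and carry on silently */ }
```

Treating a missing API as "no sound" rather than as an error keeps the audio a pure enhancement: the UI still works in browsers that can't play it.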

The Blip Library

In a number of the examples below, I chose to use the Blip library rather than directly use the Web Audio API. The Web Audio API is powerful, but it can also be quite complex - creating contexts and connecting nodes can be confusing, especially if you don't have any background in audio.

However, Blip makes it very easy to load, play and even modify the playback of audio samples. Let's look at the basics of how it works before we go further.

First, you load the file asynchronously:

    blip.sampleLoader()
        .samples({'sound': 'path/to/my/sound.wav'})
        .done(audioLoaded)
        .load();

The done method assigns a callback that will be triggered when the audio file has finished loading. Within the callback, we assign the audio file to a clip, which gives us methods to play and stop an audio sample. Notice that the sample name (i.e. sound in this case) is the same as the one given above when loading.

    function audioLoaded() {
        mysound = blip.clip().sample('sound');
    }

Now that the sound is loaded, we can trigger it to play very easily. The 0 indicates that we want it to play right away, rather than start with a delay.

    mysound.play(0);

There are many more complex things you can do with audio and the Blip library, but for the purposes of most UI interactions, these basics generally suffice.

Additional examples in this article rely on audio synthesis directly using the Web Audio API. I'm not going to go into detail about how all the pieces of the Web Audio API work; I'll simply show how it's done. If you want a deeper understanding of how the Web Audio API works, O'Reilly offers a free ebook titled Web Audio API by Boris Smus that covers all the aspects in great detail.

Adding Audio to Buttons

Most of the time, adding audio to a button press would be superfluous and, if overused, could get very annoying. However, there are exceptions to this.

For example, an application could have a button that changes a value or performs some kind of function when it is pressed multiple times. In a scenario like this, it might be useful to add another form of feedback to let the user know that the button press has been completed (helping to keep click-happy users from inadvertently doing something they didn't intend). Another potential scenario would be a button that increments or decrements a value. In this case, you might want to trigger an audio response when the value has reached its limit.

These are just two potential ideas, but the point is that there are cases where adding audio to buttons could actually enhance interactions. Let's look at how this can be done.

Using an Audio File

In the first example, we have a simple button:

    <button id="clickme" disabled="true">Click Me!</button>

Assuming we have loaded Blip, the code to make this button trigger a .wav file is easy.

    var buttonClick, // assigned once the sample has loaded
        clickme = document.getElementById("clickme");

    clickme.addEventListener('click',clickHandler);

    blip.sampleLoader()
        .samples({'button': 'audio/button.wav'})
        .done(audioLoaded)
        .load();

    function audioLoaded() {
        buttonClick = blip.clip().sample('button');
        clickme.disabled = false;
    }

    function clickHandler(e) {
        playTone();
        // do other stuff
    }

    function playTone() {
        buttonClick.play(0);
    }

First, we create a reference to the button. It is disabled by default since we want to ensure that the audio is loaded before it is enabled (though, if the audio file is small, this typically should happen very quickly). We also assign the button a click handler.

Next, we tell Blip to load the sample audio file and to trigger a callback when the file is loaded. Inside this callback, we assign the sample to a clip instance (named buttonClick) and then enable the button.

Finally, within our click handler, we simply call the function to play back the audio clip. That's it!

View the full code for this example on GitHub.

Using Audio Synthesis

The Web Audio API offers the ability to generate synthesized sound directly from the browser, without loading any sort of audio file. While the code to achieve this is more complex, you can have fine-tuned control over the sound you create (using just a little knowledge of audio synthesis) with the benefit that your user doesn't have to download anything.

Assuming the same button as in the previous example, here's the code required to accomplish this:

    var ctx = new AudioContext(),
        clickme = document.getElementById('clickme');

    clickme.addEventListener('click',clickHandler);

    function clickHandler(e) {
        playBeep();
    }

    function playBeep() {
        var o = ctx.createOscillator(), g = ctx.createGain(), playLength = 0.06;
        o.type = 'sine';
        o.frequency.value = 261.63;
        o.start(ctx.currentTime);
        o.stop(ctx.currentTime + playLength);
        g.gain.value = 0.5;
        // hold the gain at 0.5 until just before the end, then fade to silence
        g.gain.setValueAtTime(0.5,ctx.currentTime + playLength - 0.02);
        g.gain.linearRampToValueAtTime(0,ctx.currentTime + playLength);
        o.connect(g);
        g.connect(ctx.destination);
    }

The AudioContext that you see created initially is necessary to do anything with the Web Audio API. The prior example had it too, but it was abstracted away by the Blip library. In this case, our click handler calls the playBeep method.

Within the playBeep method, we create an oscillator, which generates the synthesized sound, and a gain, which controls the amplitude (essentially the volume of the sound). I've chosen to use a sine wave with a frequency of 261.63 Hz (which, for you music nerds, corresponds to C4).

Next, I tell the Web Audio API to start the sound right now and stop it once the specified length has been hit (0.06 seconds in this case). This doesn't actually cause the sound to play yet, as I have not hooked the sound to the destination (I told you the Web Audio API could be tricky).

Before doing that though, I set the gain to half, where the minimum gain is 0 and the maximum is 1. I also set the sound to fade very quickly. This helps prevent the clipping sound you may get when abruptly ending playback of a note. Finally, I connect the oscillator to the gain and the gain to the audio context, which causes the sound to play.

Fortunately, all of this happens so quickly that the sound appears to play instantaneously.

View the full code for this example on GitHub.
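If you find yourself writing the playBeep steps more than once, they could be wrapped in a small helper. The sketch below is one possible shape for it, not code from the article's repository: scheduleBeep and its options object are hypothetical names, and it only uses standard Web Audio calls (createOscillator, createGain, setValueAtTime, linearRampToValueAtTime). It connects the nodes before starting the oscillator and holds the requested volume until the fade begins.

```javascript
// Hypothetical helper: schedules a short tone on an AudioContext-like object
// and returns the nodes it created.
function scheduleBeep(ctx, options) {
    var dur = options.duration,                      // length in seconds
        vol = options.volume,                        // gain between 0 and 1
        fade = Math.min(options.fade || 0.02, dur),  // fade-out time in seconds
        now = ctx.currentTime,
        osc = ctx.createOscillator(),
        gain = ctx.createGain();

    // Wire the graph first: oscillator -> gain -> speakers.
    osc.connect(gain);
    gain.connect(ctx.destination);

    osc.type = options.type || 'sine';
    osc.frequency.value = options.frequency;         // in Hz

    // Hold the chosen volume, then ramp to silence to avoid an audible click.
    gain.gain.setValueAtTime(vol, now);
    gain.gain.setValueAtTime(vol, now + dur - fade);
    gain.gain.linearRampToValueAtTime(0, now + dur);

    osc.start(now);
    osc.stop(now + dur);

    return { oscillator: osc, gain: gain };
}

// In a browser, the button example's beep would be roughly:
//   scheduleBeep(ctx, { frequency: 261.63, volume: 0.5, duration: 0.06 });
```

Passing the context in as an argument means a single AudioContext can be shared by every sound on the page.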

Adding Audio to Sliders

A slider, to me, presents a slightly more obvious use case for audio feedback than a button, since sliders are built for raising and lowering a value. In certain scenarios, it can be useful to provide the user with some audio feedback that further indicates the change in value. Let's explore a couple of possibilities. For this example, I'll be using the slider from Kendo UI Core (the free and open source distribution of Kendo UI).

Using an Audio File

For example, you may want to have a ping tone that changes pitch up and/or down to indicate a value being raised or lowered via the slider. Another scenario might be a slider that changes volume within a widget of some sort, in which case we'd have the ping tone change in volume as well to demonstrate the new level being set.

The first thing we need is a slider for our UI.

    <input id="slider" value="0" />

Assuming that we've loaded the Kendo UI JavaScript and CSS, jQuery and Blip prerequisites, we simply instantiate the slider and add listeners that are triggered as the value is changed by the user.

Changing the Tone

Let's look at the code to change the tone first.

    var ping, lastPlayedRate = 0,
        slider = $("#slider").kendoSlider({
            increaseButtonTitle: "Right",
            decreaseButtonTitle: "Left",
            min: -10,
            max: 10,
            smallStep: 2,
            largeStep: 1,
            slide: sliderOnSlide,
            change: sliderOnChange,
            enable: false
        }).data("kendoSlider");

    blip.sampleLoader()
        .samples({'ping': 'audio/ping.wav'})
        .done(audioLoaded)
        .load();

    function audioLoaded() {
        ping = blip.clip().sample('ping');
        slider.enable(true);
    }

    function sliderOnSlide(e) {
        pingFreq(e);
    }

    function sliderOnChange(e) {
        pingFreq(e);
    }

    function pingFreq(e) {
        var rate = 1 + e.value/10;
        // only play when the rate differs from the one played last
        if (lastPlayedRate != rate)
            ping.play(0,{rate:rate});
        lastPlayedRate = rate;
    }

We're creating a slider using Kendo UI that has values from -10 to 10. We've also assigned event handlers for the slide and change events. This is because we want the new tone to play regardless of whether the user slides or clicks on the slider to change the value.

As in the prior examples using Blip, our UI that is dependent on the audio file isn't enabled until Blip has loaded the corresponding audio file. Both the change and slide handlers call the pingFreq method, which sets a rate based upon the value of the slider (you could also change the rate based upon the change in the value rather than the actual value itself). The rate is what determines how fast the audio file is played by Blip. A faster or slower rate will change the tone of the sound.

When you change the value of the slider by sliding (as opposed to clicking on a value), the slide event is triggered on every tick on the way up or down. However, the change event is triggered when you stop sliding. This means that both events are triggered when you stop sliding. Thus, we need to check to see if the current rate is the same as the last rate played, thereby preventing the same tone from being triggered twice by both events.
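The "skip it if it's the same as last time" check can also be pulled out into a small wrapper, so the slide and change handlers don't each need their own bookkeeping variable. This is just a sketch of the idea; onlyWhenChanged is a hypothetical helper name, not something provided by Kendo UI or Blip.

```javascript
// Hypothetical helper: wraps a callback so that it only runs when the value
// differs from the value it was last called with.
function onlyWhenChanged(callback) {
    var hasLast = false, last;
    return function (value) {
        if (hasLast && last === value) {
            return false;   // same value as last time, so skip the callback
        }
        hasLast = true;
        last = value;
        callback(value);
        return true;        // the callback ran
    };
}

// With the slider example, both handlers could share one wrapped function:
//   var pingForValue = onlyWhenChanged(function (value) {
//       ping.play(0, {rate: 1 + value/10});
//   });
//   function sliderOnSlide(e)  { pingForValue(e.value); }
//   function sliderOnChange(e) { pingForValue(e.value); }
```

Note that, unlike the lastPlayedRate check (which starts at 0), this version has no "already played" value to begin with, so the very first call always plays.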

View the full code for this example on GitHub.

Changing the Volume

The code to change the volume is very similar.

    var ping, lastPlayedGain = 0,
        slider = $("#slider").kendoSlider({
            change: sliderOnChange,
            slide: sliderOnSlide,
            min: 0,
            max: 10,
            smallStep: 1,
            largeStep: 2,
            tickPlacement: "both",
            enable: false
        }).data("kendoSlider");

    blip.sampleLoader()
        .samples({'ping': 'audio/ping.wav'})
        .done(audioLoaded)
        .load();

    function audioLoaded() {
        ping = blip.clip().sample('ping');
        slider.enable(true);
    }

    function sliderOnSlide(e) {
        pingGain(e);
    }

    function sliderOnChange(e) {
        pingGain(e);
    }

    function pingGain(e) {
        var gain;
        if (e.value > 0)
            gain = e.value/10;
        else
            gain = 0;
        if (lastPlayedGain != gain)
            ping.play(0,{gain:gain});
        lastPlayedGain = gain;
    }

The primary difference here is the pingGain method. Since gain is set as a value between 0 and 1, we divide the value on our volume slider (0 through 10) by 10 - assuming the value isn't already 0. If we didn't just play the tone at the same volume, we play it, passing in the appropriate gain.
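The slider-to-gain conversion described above can be written as a tiny standalone function, which makes it easy to reuse if the slider's range ever changes. This is only a sketch: sliderToGain is a hypothetical name, and clamping to the 0 to 1 range is an extra safety assumption that the original pingGain doesn't need with a 0 to 10 slider.

```javascript
// Hypothetical helper: maps a slider value in [0, max] to a gain in [0, 1].
function sliderToGain(value, max) {
    var gain = value / max;
    if (!(gain > 0)) {
        return 0;               // zero, negative and NaN all mean silence
    }
    return Math.min(gain, 1);   // never go above full volume
}

// In pingGain, this would replace the if/else:
//   var gain = sliderToGain(e.value, 10);
```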

View the full code for this example on GitHub.

Using Audio Synthesis

Let's look at the same examples, but this time use oscillators to create the tone.

    var ctx = new AudioContext(), lastPlayedRate = 0, freq = 261.63;

    var slider = $("#slider").kendoSlider({
        increaseButtonTitle: "Right",
        decreaseButtonTitle: "Left",
        min: -10,
        max: 10,
        smallStep: 2,
        largeStep: 1,
        slide: sliderOnSlide,
        change: sliderOnChange
    }).data("kendoSlider");

    function sliderOnSlide(e) {
        pingFreq(e);
    }

    function sliderOnChange(e) {
        pingFreq(e);
    }

    function pingFreq(e) {
        var rate = freq + (e.value * 32.03);
        if (lastPlayedRate != rate) {
            playOsc('triangle',rate,0.5,0.05);
        }
        lastPlayedRate = rate;
    }

    function playOsc(type,freq,vol,dur) {
        var o = ctx.createOscillator(), g = ctx.createGain();
        o.type = type;
        o.frequency.value = freq;
        o.start(ctx.currentTime);
        o.stop(ctx.currentTime + dur);
        g.gain.value = vol;
        // hold the requested volume until just before the end, then fade out
        g.gain.setValueAtTime(vol,ctx.currentTime + dur - 0.01);
        g.gain.linearRampToValueAtTime(0,ctx.currentTime + dur);
        o.connect(g);
        g.connect(ctx.destination);
    }

Much of this should look familiar by now based upon all the prior examples. We set the default frequency to the equivalent of C4 again and change that depending on the value of the slider (why by 32.03? Because that's the difference between C4 and D4, though the frequency differences between notes are not actually uniform).
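If you'd rather have each slider step land on an actual note, the usual approach in 12-tone equal temperament is to multiply rather than add: each semitone up multiplies the frequency by the twelfth root of 2, so f = base * 2^(n/12). The sketch below shows that calculation; noteFrequency is a hypothetical helper name, and using semitone steps instead of the fixed 32.03 Hz offset is an alternative to the example's approach, not what the example does.

```javascript
var C4 = 261.63; // Hz, the same base frequency used in the example

// Frequency of the note `semitones` above (or, if negative, below) `base`
// in 12-tone equal temperament.
function noteFrequency(base, semitones) {
    return base * Math.pow(2, semitones / 12);
}

// D4 is two semitones above C4, so noteFrequency(C4, 2) comes out close to
// 293.66 Hz, which is where the 32.03 Hz difference comes from.
// Twelve semitones up doubles the frequency (one octave): C5.
//
// In pingFreq, this would look like:
//   var rate = noteFrequency(freq, e.value);
```

With this mapping, the -10 to 10 slider covers a bit less than an octave in each direction, and equal slider steps sound like equal musical steps.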

The playOsc method handles creating and playing the blip sound. This works nicely because we can reuse this method to change the volume of the blip in the subsequent example.

View the full code for this example on GitHub.

Changing the Volume

The code for this example is nearly identical to the prior one. The primary difference is that we pass the same frequency to the playOsc method every time, just with a different volume value.

    var ctx = new AudioContext(), lastPlayedGain = 0, freq = 261.63;

    var rangeSlider = $("#slider").kendoSlider({
        change: sliderOnChange,
        slide: sliderOnSlide,
        min: 0,
        max: 10,
        smallStep: 1,
        largeStep: 2,
        tickPlacement: "both"
    }).data("kendoSlider");

    function sliderOnSlide(e) {
        pingGain(e);
    }

    function sliderOnChange(e) {
        pingGain(e);
    }

    function pingGain(e) {
        var gain, rate = 523.25;
        if (e.value > 0)
            gain = e.value/10;
        else
            gain = 0;
        if (lastPlayedGain != gain)
            playOsc('triangle',rate,gain,0.05);
        lastPlayedGain = gain;
    }

    function playOsc(type,freq,vol,dur) {
        var o = ctx.createOscillator(), g = ctx.createGain();
        o.type = type;
        o.frequency.value = freq;
        o.start(ctx.currentTime);
        o.stop(ctx.currentTime + dur);
        g.gain.value = vol;
        // hold the requested volume until just before the end, then fade out
        g.gain.setValueAtTime(vol,ctx.currentTime + dur - 0.01);
        g.gain.linearRampToValueAtTime(0,ctx.currentTime + dur);
        o.connect(g);
        g.connect(ctx.destination);
    }

View the full code for this example on GitHub.

Adding Audio to Notifications

Notifications are by far the most obvious use case for adding audio to your applications. There are countless types of notifications where an audio indication might be helpful, from a new message arriving, to an error on a form, or even a basic "your request has completed" type of notification. Adding sound to notifications ensures that something important isn't overlooked just because the user isn't looking at the screen.

As with the prior examples, I'll be using the notifications from Kendo UI Core (the free and open source distribution of Kendo UI).

Using an Audio File

Let's first look at a simple example using just a basic notification that is triggered by an event on the page.

Basic Notification

The UI of our example just includes a span to contain the notification and a button to trigger it.

    <span id="popupNotification" style="display:none"></span>
    <button id="show">Show Notification</button>

As always, let's assume we have loaded Kendo UI, jQuery and Blip.

    var ping,
        popupNotification = $("#popupNotification").kendoNotification({show: onPopup}).data("kendoNotification"),
        show = $('#show').kendoButton({enable: false, click: showPopup}).data("kendoButton");

    blip.sampleLoader()
        .samples({
            'ping': 'audio/ping.wav'
        })
        .done(audioLoaded)
        .load();

    function audioLoaded() {
        ping = blip.clip().sample('ping');
        show.enable(true);
    }

    function showPopup() {
        popupNotification.show("Something important happened");
    }

    function onPopup(e) {
        ping.play(0);
    }

We initialize the popup notification and assign an event handler for the show event. Within this event handler, we play our sample tone. Although the button press triggers the notification, it is actually the notification displaying that causes the tone to play. This means that regardless of how the notification gets triggered from within the application, it will properly play the audio.

View the full code for this example on GitHub.

A More Complex Notification

A more common use case might be where an application asynchronously calls the server (for example, to check for new messages) and then shows a notification if and when a response is received.

Our UI is essentially the same:

    <span id="emailNotification" style="display:none"></span>
    <button id="messages">Check for Messages</button>

There is one difference, though: in this case we are going to add a template to the notification display, so that it looks more like an email is being received.

    <script id="emailTemplate" type="text/x-kendo-template">
        <div class="new-mail">
            <img src="images/envelope.png" />
            <h3>#= title #</h3>
            <p>From: #= from #</p>
        </div>
    </script>

When the "Check for Messages" button is pressed, we're not going to directly call the notification. Rather, we'll make a call out to the server to check for new messages, via the checkMessages method in the code below.

    var notification, // assigned once the sample has loaded
        emailNotification = $("#emailNotification").kendoNotification({
            show: onEmailReceived,
            templates: [{
                type: "email",
                template: $("#emailTemplate").html()
            }]
        }).data("kendoNotification"),
        messages = $('#messages').kendoButton({enable: false, click: checkMessages}).data("kendoButton");

    blip.sampleLoader()
        .samples({
            'notification': 'audio/notification.wav'
        })
        .done(audioLoaded)
        .load();

    function audioLoaded() {
        notification = blip.clip().sample('notification');
        messages.enable(true);
    }

    function checkMessages(e) {
        $.getJSON( "js/response.js", function(data) {
            emailNotification.show({
                title: data.title,
                from: data.from
            }, "email");
        });
    }

    function onEmailReceived(e) {
        notification.play(0,{rate:1});
    }

The checkMessages method uses jQuery to asynchronously check the server for new messages and assigns a callback handler for when a successful response is received. This callback handler displays the notification using the template that was assigned to it and passing in the values received in the JSON response.

The onEmailReceived method was assigned to the show event for the notification. When the response triggers the notification, the sound is then played, letting our user know that a new message has arrived.
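In a real application, the server may well respond with no new message, or the request may fail, and in those cases you probably don't want the sound to play at all. Here's a hedged sketch of how the check could be guarded. Everything named here is hypothetical: checkForMessages, the loadJson(url, onSuccess, onError) function and the notify(message) function are stand-ins that you would supply yourself, and the rule that a message must have both a title and a from value is an assumption about the response format.

```javascript
// Hypothetical sketch: only notify (and therefore only play the sound)
// when the response actually contains a message.
function checkForMessages(loadJson, notify, onError) {
    loadJson('js/response.js', function (data) {
        if (data && data.title && data.from) {
            notify({ title: data.title, from: data.from });
        }
    }, onError || function () {});
}

// With jQuery and the Kendo UI notification from the example,
// the two stand-ins could be written as:
//   function loadJson(url, onSuccess, onError) {
//       $.getJSON(url).done(onSuccess).fail(onError);
//   }
//   function notify(message) {
//       emailNotification.show(message, "email");
//   }
//   checkForMessages(loadJson, notify);
```

Because the sound is tied to the notification's show event, skipping the notification is all it takes to keep things quiet.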

View the full code for this example on GitHub.

Wrapping Up

Obviously the notifications sample could also be redone using oscillators in the Web Audio API. However, most of the time, an email notification sound is more than just a simple blip, which would make it slightly more complicated to generate.

If you'd like the full code for the samples in this article (and a couple of other fun ones as well), you can find them on GitHub.

Hopefully this article has given you some ideas for how you might usefully and tastefully incorporate audio into your web application using either the Blip library or the Web Audio API and oscillators directly. These are only a handful of valid use cases for audio, though, and I'd love to hear more ideas people have for others (and I'll do my best to incorporate additional samples into my repository as well).

Brian Rinaldi

Brian Rinaldi is a Developer Advocate at Progress focused on the Kinvey mobile backend as a service. You can follow Brian via @remotesynth on Twitter.